Notes from: TDD Where did it all go wrong

Below are notes I took while watching Ian Cooper's talk, "TDD, Where Did It All Go Wrong".

- If we change the implementation details, none of our tests should break.

- We write more test code than implementation code. Why? This seems wrong.

- "Programmer Anarchy": devs hack code and throw it away after 1 year.

- Re-read the book Test-Driven Development: By Example by Kent Beck.

- The test should be around the new behaviour that you want to add.

- Express in your tests that you are testing new behaviours in your system. Before you write the implementation, write down all of the new behaviours that you need to capture.

Testing outside in

- Don't make the "outside" your UX or REST API

- Focus on testing your domain models

We should be testing the API (the surface of a module)

- We should be testing things that are public, not things that are internal or private.

- Don't use `InternalsVisibleTo`.

- As soon as you start testing your internals, you are coupling your tests to your implementation details. If you want to change your implementation details, then you will have to rewrite your tests.

- The surface area that you are testing should be much narrower than what people are testing today.

- This means that you will write fewer tests. You write tests against the use cases, and those use cases are exposed by the external API.

- This means that when you refactor, that contract remains the same. The internals change, but you don't break the tests.

- Quote from Dan North: "What behaviour will we need to produce the revised report? Put another way, what set of tests, when passed, will demonstrate the presence of code we are confident will compute the report correctly".
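A minimal sketch of what testing only the public surface can look like. The `Basket` class, its prices in pence, and the discount rule are all hypothetical and are not taken from the talk. The tests call only `add()` and `total()`, so the private `_bulk_discount` helper can be renamed, inlined, or moved to another class without any test changing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Line:
    unit_price: int  # hypothetical: price in pence
    quantity: int


class Basket:
    """Public surface of the module (assumed for illustration): add() and total()."""

    def __init__(self):
        self._lines = []

    def add(self, unit_price, quantity=1):
        self._lines.append(Line(unit_price, quantity))

    def total(self):
        subtotal = sum(line.unit_price * line.quantity for line in self._lines)
        return subtotal - self._bulk_discount(subtotal)

    def _bulk_discount(self, subtotal):
        # Implementation detail: no test refers to this method by name.
        return subtotal // 10 if subtotal >= 10_000 else 0


def test_small_basket_pays_full_price():
    basket = Basket()
    basket.add(unit_price=2_500, quantity=2)
    assert basket.total() == 5_000


def test_large_basket_gets_ten_percent_off():
    basket = Basket()
    basket.add(unit_price=5_000, quantity=2)
    assert basket.total() == 9_000
```

Each test name states a behaviour ("large basket gets ten percent off"), not a method on a class.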

Testing Behaviours with example

- We should only be testing the external part of our domain, i.e. where our UX or REST API interacts with our customers

- The internal API is what we should be testing

What is a unit test?

- A test that runs in isolation from other tests

- It does NOT mean that you should only be testing a single class under test and have all other dependencies mocked out

- When the test runs, it shouldn't have side effects that impact other tests in the suite. It is the test that is isolated, not the thing under test.

- By mocking all of the collaborators out, you are digging into implementation details and your tests become over-specified. This is wrong because the test knows far too much about your implementation, and as soon as you change your implementation all of the tests break because of all of these mocks. Don't do this.

- The only things that you should be mocking are the things that prevent your tests from being isolated, not the things that prevent your class under test from being isolated.
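The points above can be sketched as follows. All names here (`RegisterUser`, `PasswordPolicy`, `InMemoryUserStore`) are hypothetical. The only thing replaced is the store, on the assumption that the real one would be a shared database that lets tests interfere with each other. The `PasswordPolicy` collaborator is pure logic, so the test uses the real one.

```python
class PasswordPolicy:
    """Real collaborator: pure logic, so there is no reason to mock it."""

    def is_acceptable(self, password):
        return len(password) >= 8


class InMemoryUserStore:
    """Stand-in for a database-backed store (assumed to exist in production)."""

    def __init__(self):
        self._users = {}

    def save(self, email, password):
        self._users[email] = password

    def exists(self, email):
        return email in self._users


class RegisterUser:
    def __init__(self, store, policy=None):
        self._store = store
        self._policy = policy or PasswordPolicy()

    def execute(self, email, password):
        if self._store.exists(email) or not self._policy.is_acceptable(password):
            return False
        self._store.save(email, password)
        return True


def test_registers_new_user():
    store = InMemoryUserStore()  # fresh store per test: no shared state
    assert RegisterUser(store).execute("a@example.com", "long-enough") is True
    assert store.exists("a@example.com")


def test_rejects_short_password():
    store = InMemoryUserStore()
    assert RegisterUser(store).execute("a@example.com", "short") is False
    assert not store.exists("a@example.com")
```

Because each test builds its own store, the tests can run in any order, which is the sense of "isolated" used in these notes.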

Red Green Refactor

Green: Solving the problem

- Write sinful code to make it pass

- Go green as fast as possible and commit as many sins as necessary to get there

- So basically go to your code project and cut and paste some code to make it go green

- We can't think about the problem and how to engineer it at the same time. We can generally think well about one or the other

- Just solve the problem. Just go away and make that test green.

- Write 20, 30, 60 lines of code at this point. Don't make it pretty just write code to get to solutions fast.

- You want to get to solutions fast.

- Kent Beck: "We can commit any number of sins to get there, because speed trumps design, just for that brief moment"

- The point is to get to the solution as fast as you can, so speed trumps design

- Kent Beck: "Good design at good times. Make it run, make it right"

Refactor: This is where you do clean code

-

The refactoring step is when we product clean code

-

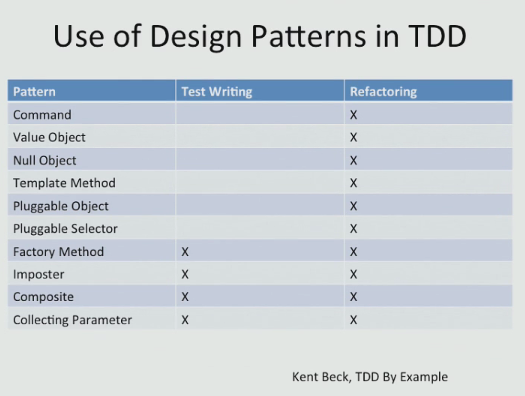

Apply Design Patters (see Joshua Kerievsky on Refactoring to Patterns

-

Remove Duplication

-

Sanitize the code smells

-

Look at SOLID. What am I violating -> fix these

-

Feature Envy, Shotgun Surgery -> fix these

-

Do not write new unit tests here because you are not introducing public classes

-

Use a code coverage tool. If the code coverage decreases it means that you added new code, such as an IF statement somewhere.

-

If you were to add new tests at this point, then you are coupling your tests to your implementation details, which is bad.

-

the API is your contract, the unchanging thing, and as soon as you write tests against the re-factoring opportunities, you bind your tests to your implementation details. For example, if you refactor, and add a new class to clean up the code. Don't write tests against this new class.

-

coupling is your biggest enemy

-

do not couple your tests to your implementation details

-

don't bake implementation details into tests!

-

Test behaviours, not implementations. This gives us the ability to change implementation details without changing tests. This is what we are looking for in TDD.

Use of Design Patterns in TDD

Hexagonal Architecture: Ports and Adapters

Run Our Tests in Isolation

- We can't use real things like a database because then our tests are not isolated

Don't test what you don't own

Driving in Gears

- We are driving in 5th gear for most of our development

- Sometimes we go down to 4th gear

- Behavioural tests guide future programmers

- Feel free to delete tests that are coupled to implementation details if necessary

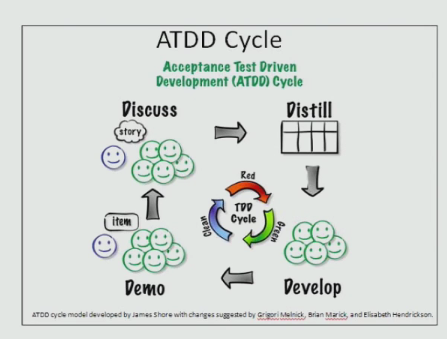

ATDD

- Stop doing ATDD because customers don't seem to participate.

- Unit tests seem to devolve into programmer tests, which we don't want.

- Real solution: take the notion that we want to be testing behaviours, but do so in unit tests.

BDD

- Key issue is how we are writing our unit tests

Mocks

- Do not mock the internals

- Mock other ports and mock other publics

- Do not mock Adapters

- Mock your outgoing port to the adapter

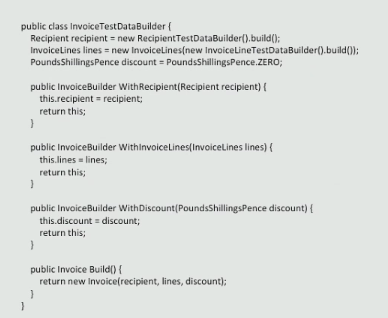
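One possible reading of the mocking rules above, sketched with Python's standard `unittest.mock`. The `ReceiptSender` port, the `SmtpReceiptSender` adapter, and the `Checkout` class are hypothetical names, not from the talk. The test mocks the outgoing port that the domain depends on and never touches the adapter behind it.

```python
from abc import ABC, abstractmethod
from unittest.mock import Mock


class ReceiptSender(ABC):
    """Outgoing port (hypothetical): what the domain needs from the outside world."""

    @abstractmethod
    def send(self, email, amount):
        ...


class SmtpReceiptSender(ReceiptSender):
    """Adapter: would talk to a real mail server, so unit tests do not use it."""

    def send(self, email, amount):
        raise NotImplementedError("needs a real SMTP server")


class Checkout:
    def __init__(self, receipts):
        self._receipts = receipts

    def pay(self, email, amount):
        if amount <= 0:
            raise ValueError("amount must be positive")
        self._receipts.send(email, amount)


def test_paying_sends_a_receipt():
    receipts = Mock(spec=ReceiptSender)  # mock the port, not the adapter
    Checkout(receipts).pay("a@example.com", 1_250)
    receipts.send.assert_called_once_with("a@example.com", 1_250)
```

Passing `spec=ReceiptSender` restricts the mock to the port's own methods, so a call to a method the port does not declare raises `AttributeError` instead of silently passing. The adapter itself would be covered by an integration test.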

Use the Builder Pattern for Building Well-Constructed Objects for Tests
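A small sketch of a test data builder, assuming a hypothetical `Customer` type and `free_shipping` rule. The builder supplies valid defaults so that each test states only the detail that matters to the behaviour being tested.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    name: str
    email: str
    country: str
    is_vip: bool


class CustomerBuilder:
    """Builds a valid Customer; tests override only what they care about."""

    def __init__(self):
        self._name = "Ada Example"
        self._email = "ada@example.com"
        self._country = "GB"
        self._is_vip = False

    def in_country(self, country):
        self._country = country
        return self  # returning self allows chained calls

    def vip(self):
        self._is_vip = True
        return self

    def build(self):
        return Customer(self._name, self._email, self._country, self._is_vip)


def free_shipping(customer):
    # Hypothetical rule under test: VIPs and domestic customers ship free.
    return customer.is_vip or customer.country == "GB"


def test_overseas_customer_pays_shipping():
    customer = CustomerBuilder().in_country("FR").build()
    assert not free_shipping(customer)


def test_overseas_vip_ships_free():
    customer = CustomerBuilder().in_country("FR").vip().build()
    assert free_shipping(customer)
```

If `Customer` later gains a new required field, only the builder's defaults change and the tests stay as they are.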

Summary

- The reason to test is a new behaviour, not a method on a class

- Write dirty code to get green, then refactor

- No new tests for refactored internals and privates (methods and classes)

- Both develop and accept against tests written on a port

- Add integration tests for coverage of ports and adapters

- Add system tests for end-to-end confidence

- Don't mock internals, privates, or adapters